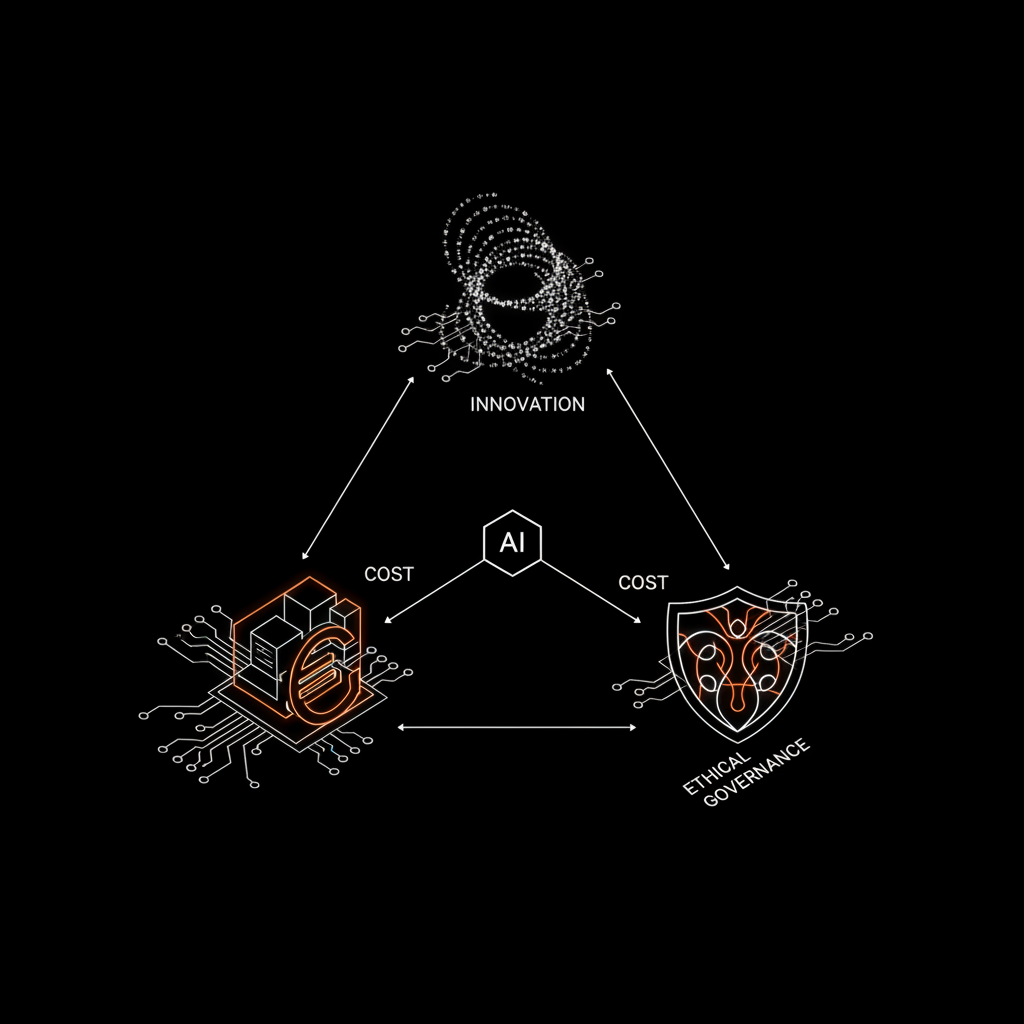

Artificial intelligence promises to radically transform how we build software, accelerating processes and increasing efficiency. However, for many teams and companies, integrating AI brings an array of unexpected challenges: unpredictable costs and intricate ethical and intellectual property issues that demand careful governance.

The Hidden Costs of Generative AI in Software Development

Initial enthusiasm for tools like GitHub Copilot, or for direct API access to models such as Anthropic's Claude or OpenAI's GPT, can quickly clash with economic reality. The unit cost of a single API call or a monthly subscription might seem modest, but at scale the total spend can rapidly become unsustainable.

Consider, for instance, integrating a Large Language Model (LLM) for code generation, automated documentation, or debugging assistance. Each interaction incurs a cost, and without implementing strategies like caching, prompt optimization, or judicious model selection (perhaps opting for open-source solutions or more efficient models for specific tasks), you risk an explosion in operational costs. We've seen projects where intensive API usage led to monthly bills ten times higher than initial estimates, eroding margins and delaying production.
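To make the caching idea concrete, here is a minimal sketch of a response cache placed in front of a model call. It assumes a hypothetical `call_model(model, prompt)` function standing in for whatever API client you actually use; `CachedLLMClient` and `fake_model` are illustrative names, not part of any real SDK.

```python
import hashlib
from typing import Callable, Dict


class CachedLLMClient:
    """Wraps a model-calling function and reuses answers for identical requests."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        # call_model(model, prompt) is a hypothetical stand-in for a real API client.
        self._call_model = call_model
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share one cache entry.
        normalised = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalised}".encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._cache:
            self.hits += 1  # served from memory, no billable call
            return self._cache[key]
        self.misses += 1  # only cache misses reach the paid backend
        answer = self._call_model(model, prompt)
        self._cache[key] = answer
        return answer


def fake_model(model: str, prompt: str) -> str:
    # Placeholder backend used only for this illustration; makes no network call.
    return f"[{model}] reply to: {' '.join(prompt.split())}"
```

An in-memory dictionary is enough to show the principle. A shared store with expiry would likely be needed in a real team setting, and exact-match caching only pays off where prompts genuinely repeat, such as documentation generation over unchanged files.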

It's crucial to approach these tools with a cost-engineering mindset: meticulously monitor usage, define precise budgets, and implement fallback or throttling logic. The choice between an advanced model like Claude 3 Opus and a lighter, cheaper one for specific tasks, perhaps combined with retrieval-augmented generation (RAG) or targeted fine-tuning, can make an enormous economic difference.
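As a rough illustration of fallback and throttling logic, the sketch below routes requests to a premium or an economy tier depending on how much of the monthly budget is already spent. Everything here is an assumption made for the example: the `BudgetRouter` and `ModelTier` names, the 80% fallback ratio, and above all the prices, which are placeholders rather than any vendor's real rates.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_tokens: float  # placeholder price, not a real rate card


class BudgetRouter:
    """Chooses a model tier from the remaining monthly budget."""

    def __init__(self, budget_usd: float, premium: ModelTier, economy: ModelTier,
                 fallback_ratio: float = 0.8) -> None:
        self.budget_usd = budget_usd
        self.premium = premium
        self.economy = economy
        self.fallback_ratio = fallback_ratio  # share of budget after which premium is disabled
        self.spent_usd = 0.0

    @staticmethod
    def cost(tier: ModelTier, tokens: int) -> float:
        return tokens / 1000 * tier.usd_per_1k_tokens

    def choose(self, estimated_tokens: int, needs_premium: bool) -> Optional[ModelTier]:
        # Fallback: once spending nears the limit, every request uses the economy tier.
        near_limit = self.spent_usd >= self.fallback_ratio * self.budget_usd
        tier = self.premium if needs_premium and not near_limit else self.economy
        # Throttle: refuse the request if it would push spending over the budget.
        if self.spent_usd + self.cost(tier, estimated_tokens) > self.budget_usd:
            return None
        return tier

    def record(self, tier: ModelTier, tokens_used: int) -> None:
        # Call after each request with the token count reported by the provider.
        self.spent_usd += self.cost(tier, tokens_used)
```

A caller would ask `choose()` before each request, treat `None` as "queue or reject", and call `record()` afterwards. Whether a hard refusal or a softer degradation is appropriate depends on the workload, so treat the policy itself as a starting point.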

Intellectual Property and Ethics: The Core Challenge

Beyond economic aspects, even deeper issues emerge concerning intellectual property (IP) and ethical responsibility for AI-generated code. Who holds the rights to code written by an LLM? And what happens if that code includes fragments derived from copyrighted training data?

The nature of generative models, trained on vast corpora of public and private data, introduces significant legal ambiguity. The risk of code 'contamination', with snippets that might reproduce copyrighted works or contain unidentified vulnerabilities, is real. For SMEs and startups investing in custom software development, certainty of code ownership is non-negotiable. Every line of code must be clean, defensible, and free from third-party claims.

This is where the value of 100% human review comes into play. Blindly trusting AI output without thorough verification by experienced developers is an unacceptable gamble. It's not just about fixing functional bugs, but ensuring that the architecture is robust, best practices are adhered to, and every line of code meets ethical and legal standards. It's a cornerstone of our philosophy: AI augments human capabilities; it doesn't replace them in terms of final responsibility.

Another ethical consideration is the potential introduction of biases or security vulnerabilities. A model trained on imperfect data could generate code with unexpected security flaws or discriminatory logic. Human verification is the only effective safeguard against these intrinsic risks.

Tools and Strategies for Intelligent AI Governance

Integrating AI into software development, despite its challenges, is not a path to avoid but rather one to govern intelligently. Here are some operational strategies:

- Rigorous Tool Selection: Evaluate not only technical capabilities but also usage policies, costs, and support. Tools like LangChain can assist in orchestrating AI workflows, while libraries such as Polars can streamline the data analysis and cleaning that RAG depends on.

- Cost Monitoring and Optimization: Implement dashboards to track API and token usage. Define spending thresholds and automatic alerts. Evaluate self-hosting open-source models when feasible and cost-effective.

- Internal Guidelines: Develop a clear protocol for AI usage in development. This should include directives on intellectual property, code security, and the necessity of human review.

- Continuous Training: Equip teams with the necessary skills to interact effectively with AI tools, understanding their limitations and potential. An 'AI-augmented' developer delivers far more than a process in which AI simply 'replaces' the developer.

- Code Ownership: For companies like ours, ownership of the generated code is an indispensable principle. We ensure that every client has full ownership and transparency over the developed software, regardless of AI tools used in the process.
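The cost monitoring strategy in the list above, with its spending thresholds and automatic alerts, can be sketched in a few lines. This is only an outline under stated assumptions: `UsageRecord`, the project names, the per-project limits, and the 80% warning ratio are all hypothetical, and in practice the records would come from your provider's usage export or your own request logs.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, NamedTuple


class UsageRecord(NamedTuple):
    project: str
    tokens: int
    cost_usd: float


def spend_by_project(records: Iterable[UsageRecord]) -> Dict[str, float]:
    """Sums cost per project, the kind of figure a dashboard would plot."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.project] += record.cost_usd
    return dict(totals)


def threshold_alerts(totals: Dict[str, float], thresholds: Dict[str, float],
                     warn_ratio: float = 0.8) -> List[str]:
    """Returns one message per project at or above its warning or hard limit."""
    alerts: List[str] = []
    for project in sorted(totals):
        limit = thresholds.get(project)
        if limit is None:
            continue  # no threshold configured for this project
        spent = totals[project]
        if spent >= limit:
            alerts.append(f"{project}: over budget ({spent:.2f} of {limit:.2f} USD)")
        elif spent >= warn_ratio * limit:
            alerts.append(f"{project}: warning ({spent:.2f} of {limit:.2f} USD)")
    return alerts


# Illustrative data only; these figures are invented for the example.
records = [
    UsageRecord("docs-bot", 600_000, 30.0),
    UsageRecord("docs-bot", 500_000, 25.0),
    UsageRecord("code-review", 840_000, 42.0),
    UsageRecord("search", 100_000, 5.0),
]
alerts = threshold_alerts(spend_by_project(records),
                          {"docs-bot": 50.0, "code-review": 50.0, "search": 50.0})
```

With the sample data, `alerts` holds a warning for `code-review` and an over-budget message for `docs-bot`, while `search` stays silent. Routing those messages to email or chat is left out on purpose, since that part depends entirely on your tooling.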

AI is a powerful ally, capable of accelerating our development times and making us 3-5x faster than a traditional agency. However, its integration requires a mature and conscious approach. Transforming AI from an unexpected cost into a sustainable competitive advantage depends entirely on our ability to manage its risks, embrace its ethics, and optimize its use with firm and competent human supervision.

Are you ready to implement robust AI governance in your projects, ensuring innovation alongside economic security and sustainability?