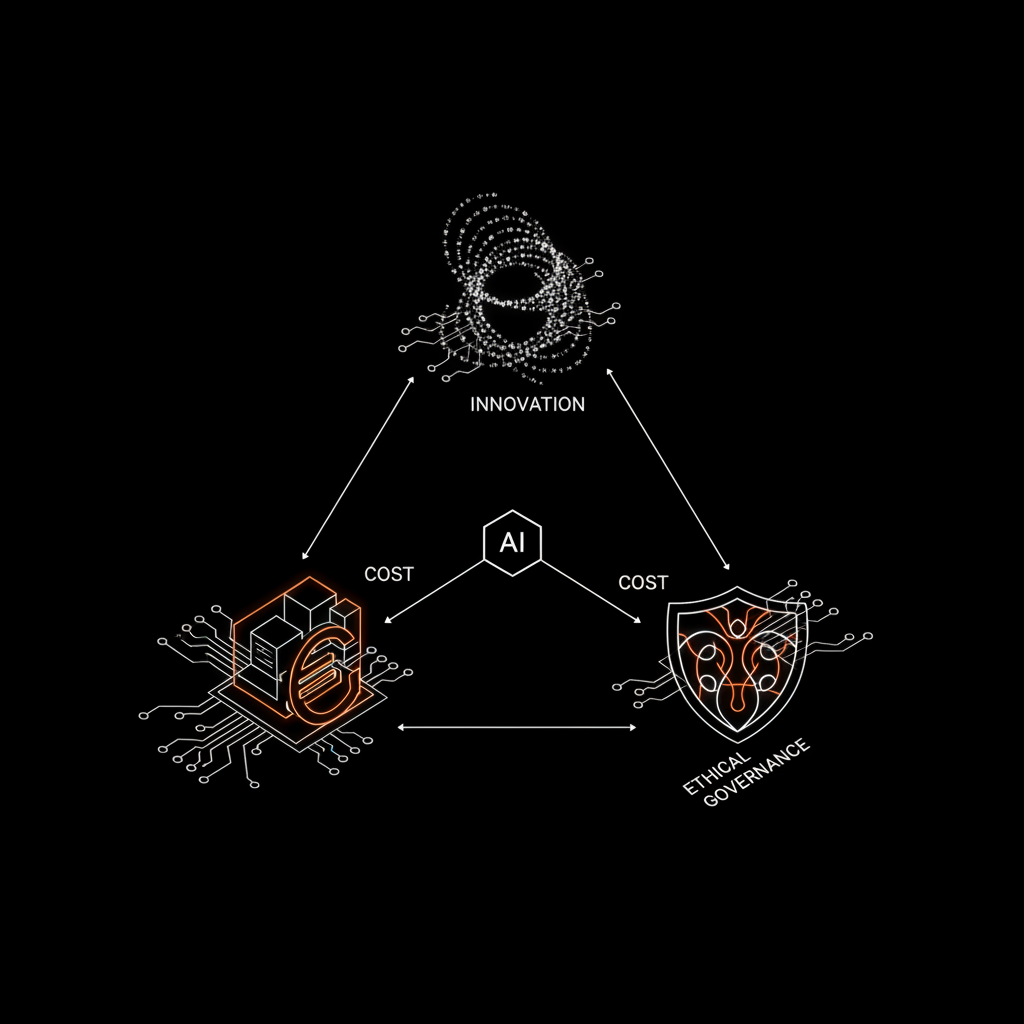

Artificial intelligence is no longer a futuristic promise but a pervasive reality shaping our interactions, our decisions, and even the code we write. As AI becomes more autonomous and more deeply integrated into business processes, complex questions arise: Who is responsible when an algorithm makes a mistake? How do we balance innovation with privacy protection? These are not academic dilemmas but urgent challenges that every B2B company must address proactively.

The Inevitable Expansion of AI and Its Ethical Shadows

AI adoption, from recommendation systems to autonomous vehicles, has exploded. Tools like GitHub Copilot accelerate development but raise questions about code attribution and intellectual property. The productivity gain is undeniable, but when AI-generated code is committed without a clear record of which parts a human wrote or reviewed, authorship becomes ambiguous. So does responsibility, and that ambiguity can lead to legal complications.

It's not just about code. Biased hiring algorithms can perpetuate and amplify existing inequalities, excluding qualified candidates on discriminatory grounds. AI-powered surveillance systems, while powerful for security, raise serious privacy concerns and the risk of personal data misuse. And who is legally liable when an autonomous vehicle causes an accident? The chain of culpability could extend from the sensor manufacturer, to the engineer who wrote the decision-making algorithm, to the vehicle owner. These scenarios are not science fiction; they are emerging, everyday realities that demand clear and robust answers.

Transparency, and explainability in particular, becomes crucial. Companies must be able to explain how their AI systems arrive at specific conclusions, especially in high-risk contexts such as finance, medicine, or human resources. Without this capability, trust erodes and legal disputes become far more likely.
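What does "explaining a conclusion" look like in practice? One simple approach, sketched below under stated assumptions, is to break a model's score into per-feature contributions relative to a reference profile. For a linear model the breakdown is exact; attribution methods such as SHAP generalize the same additive idea to non-linear models. The credit-scoring weights, feature names and baseline profile here are hypothetical and for illustration only.

```python
# Hypothetical toy credit-scoring model: a linear score over three features.
# Weights, bias and feature names are illustrative assumptions, not a real model.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -2.5, "years_employed": 0.3}
BIAS = -1.0

# Reference profile used as the explanation baseline (also an assumption).
BASELINE = {"income_k": 40.0, "debt_ratio": 0.3, "years_employed": 5.0}


def score(applicant: dict[str, float]) -> float:
    """Linear score: bias plus the weighted sum of the features."""
    return BIAS + sum(WEIGHTS[f] * applicant[f] for f in WEIGHTS)


def explain(applicant: dict[str, float]) -> list[tuple[str, float]]:
    """Per-feature contributions relative to BASELINE, largest impact first.

    For a linear model these contributions add up (up to float rounding)
    to score(applicant) - score(BASELINE).
    """
    contributions = {
        f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS
    }
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


if __name__ == "__main__":
    applicant = {"income_k": 55.0, "debt_ratio": 0.5, "years_employed": 2.0}
    print(f"score: {score(applicant):.2f}")
    for feature, delta in explain(applicant):
        print(f"{feature:>15}: {delta:+.2f}")
```

For this hypothetical applicant, the shorter employment history pulls the score down the most, which is exactly the kind of statement a reviewer or an affected person can check and contest.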

The Regulatory Framework: The AI Act and Beyond

Facing these challenges, legislators are taking action. The European Union, in particular, is at the forefront with the AI Act, an ambitious regulation that aims to govern AI based on its risk level. For Italian SMEs and startups, this is not a minor detail: it means that implementing high-risk AI systems (such as those used for hiring, credit scoring, or critical infrastructure management) will require rigorous compliance checks, impact assessments, and diligent human oversight.
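Before any of these obligations can be met, a company needs to know which of its systems are in scope. The sketch below keeps a minimal AI inventory and flags entries whose use case matches the three high-risk examples named above. The field names, use-case labels and the lookup itself are assumptions for illustration; the AI Act defines high-risk uses in much more detail, so this is a first-pass triage aid, not a legal classification.

```python
from dataclasses import dataclass, field

# Illustrative subset only: the three high-risk areas mentioned in this article.
# The AI Act defines high-risk uses in far more detail, so this lookup is a
# first-pass triage aid, not a legal classification.
HIGH_RISK_USE_CASES = {"hiring", "credit_scoring", "critical_infrastructure"}


@dataclass
class AISystem:
    name: str
    use_case: str                                        # hypothetical label, e.g. "hiring"
    data_used: list[str] = field(default_factory=list)   # data the system processes
    decisions: list[str] = field(default_factory=list)   # what it decides or recommends
    impacts: list[str] = field(default_factory=list)     # potential negative impacts

    @property
    def high_risk(self) -> bool:
        return self.use_case in HIGH_RISK_USE_CASES


def needs_compliance_review(inventory: list[AISystem]) -> list[str]:
    """Names of the systems that should go through a full compliance review."""
    return [system.name for system in inventory if system.high_risk]


if __name__ == "__main__":
    inventory = [
        AISystem("cv-screener", "hiring",
                 data_used=["CVs", "interview notes"],
                 decisions=["shortlist candidates"],
                 impacts=["qualified candidates excluded on biased grounds"]),
        AISystem("faq-bot", "customer_support", data_used=["product docs"]),
    ]
    print(needs_compliance_review(inventory))  # ['cv-screener']
```

Recording data, decisions and impacts next to each system also gives later audits a starting point.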

Failure to comply with these regulations can result in substantial fines and severe reputational damage, so B2B companies cannot afford to ignore these developments. Rather than treating them as a burden, they should see them as an opportunity to differentiate themselves by building trust and demonstrating ethical commitment. Compliance is not an obstacle but a pillar of sustainable innovation and of the social acceptance of new technologies.

Building Responsible AI Governance: A B2B Approach

How can B2B companies, especially SMEs and startups, navigate this complex landscape? The answer lies in establishing robust governance frameworks and proactive compliance strategies. Here are some essential steps:

- Risk Assessment: Identify which AI systems fall into the 'high-risk' category under the AI Act and other relevant regulations. This includes mapping the data each system uses, the decisions it makes, and its potential negative impacts.

- Transparency and Explainability: Apply a 'transparency by design' principle. This means designing systems that can explain their decisions in an understandable way, rather than inscrutable 'black boxes.' XAI (Explainable AI) tools and methodologies are increasingly mature.

- Data Quality and Bias Mitigation: Ensure that training datasets are representative, clean, and as free from bias as possible. This requires careful data curation and, where necessary, debiasing techniques. Clean data is the foundation of ethical AI.

- Human Oversight: Ensure there is always a human in the loop. Even the most sophisticated algorithms require review. At Logika.studio, we firmly believe in '100% human review' for every solution we implement, ensuring not only technical accuracy but also ethical and regulatory compliance.

- Ownership and Control: Companies must retain full ownership of the code and AI models developed for them. Ownership ensures control and flexibility, and makes internal audits and rapid adjustments possible. We consider this point fundamental, and we base our collaboration model on it.

- Regular Audits and Documentation: Implement processes for internal and external audits of AI systems, documenting design decisions, data used, and results. This traceability is vital for compliance and liability management.
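Bias mitigation starts with measuring outcomes across groups. The article does not prescribe a metric; as one common example (swapped in here, not something the AI Act mandates), the sketch below computes the disparate impact ratio behind the "four-fifths rule" from US employment-selection guidelines: the lowest group selection rate divided by the highest. Group labels and data are hypothetical.

```python
from collections import defaultdict
from typing import Iterable


def selection_rates(outcomes: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes (e.g. 'advanced to interview') per group."""
    totals: dict[str, int] = defaultdict(int)
    selected: dict[str, int] = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact(outcomes: Iterable[tuple[str, bool]],
                     threshold: float = 0.8) -> tuple[float, bool]:
    """Lowest group selection rate divided by the highest.

    Returns (ratio, flagged). Under the four-fifths heuristic a ratio below
    0.8 is flagged for investigation; it is a warning sign, not proof of bias.
    """
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no positive outcomes in any group; ratio is undefined")
    ratio = min(rates.values()) / highest
    return ratio, ratio < threshold


if __name__ == "__main__":
    # Hypothetical screening results: 50/100 of group_a and 30/100 of group_b advance.
    outcomes = ([("group_a", i < 50) for i in range(100)]
                + [("group_b", i < 30) for i in range(100)])
    ratio, flagged = disparate_impact(outcomes)
    print(f"{ratio:.2f}", flagged)  # 0.60 True
```

A flagged ratio is a prompt for human investigation of the data and the model, not an automatic verdict.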
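The human-oversight and audit points above can work together: the model only proposes, a named reviewer makes the final call, and every decision leaves a trace. The sketch below is a minimal in-memory version. The function name `review`, the record fields and the JSON-lines log are assumptions for illustration; a production system would write to durable, access-controlled storage.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    model_version: str
    input_sha256: str   # hash of the inputs, so raw inputs are not copied into the log
    model_output: str   # what the model proposed
    reviewer: str       # who made the final call
    final_decision: str
    overridden: bool    # True when the reviewer disagreed with the model


def review(model_version: str, features: dict, model_output: str,
           reviewer: str, reviewer_decision: str, log: list[str]) -> str:
    """Hypothetical human-in-the-loop gate.

    The model's output is only a proposal; a named reviewer decides, and the
    decision is appended to `log` as one JSON line before it takes effect.
    """
    if not reviewer:
        raise ValueError("a named human reviewer is required")
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    record = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        input_sha256=hashlib.sha256(payload).hexdigest(),
        model_output=model_output,
        reviewer=reviewer,
        final_decision=reviewer_decision,
        overridden=reviewer_decision != model_output,
    )
    log.append(json.dumps(asdict(record)))
    return record.final_decision


if __name__ == "__main__":
    audit_log: list[str] = []
    decision = review("screening-model-v1", {"candidate_id": 17, "score": 0.42},
                      "reject", "m.rossi", "advance", audit_log)
    print(decision)
    print(audit_log[0])
```

Logging overrides explicitly makes it easy to see, during an audit, how often reviewers disagree with the model and on which kinds of cases.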

AI is an unparalleled growth engine, but its positive impact depends on our ability to manage it ethically and in compliance with the law. Investing in robust AI governance today is not a cost but a foundation for growth and trust in the digital future. Are you ready to build your AI future with the responsibility it deserves?