The evolution of Artificial Intelligence agents is rewriting the rules of software engineering, turning what was once science fiction into operational reality. It is no longer just about algorithms that predict or classify, but about systems that can interpret a requirement, act on it, and solve complex problems within the development lifecycle. These agents are posting steadily better results on software engineering benchmarks, offering capabilities that push developers to reconsider traditional production models.

The Rise of AI Agents and Workflow Redefinition

Intelligent assistants like GitHub Copilot have already demonstrated the potential of integrating AI into the daily workflow. Their ability to suggest code blocks, complete functions, and even generate tests is not only shortening development times but also influencing how software is sold and used. Coding was once a purely artisanal process; today, the developer works alongside an AI co-pilot that amplifies individual productivity.

But the evolution doesn't stop at suggestions. Next-generation AI agents, often built on frameworks like LangChain or systems inspired by Auto-GPT, are designed to operate more autonomously. They can analyze a requirement, break down the problem into sub-tasks, generate code, test it, and, in some cases, even deploy it. This paradigm shift is profound: from simple assistance tools, agents are transforming into proactive actors, opening new frontiers for Italian SMEs seeking efficiency and innovation in custom software.
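The loop described above (plan, generate, test, deploy) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how LangChain or Auto-GPT are implemented: `run_agent`, `SubTask`, `AgentRun`, and the four callables passed in are hypothetical names, and in a real system `plan` and `generate` would wrap calls to a language model.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SubTask:
    description: str
    code: str = ""
    passed: bool = False


@dataclass
class AgentRun:
    requirement: str
    subtasks: List[SubTask] = field(default_factory=list)
    deployed: bool = False


def run_agent(
    requirement: str,
    plan: Callable[[str], List[str]],       # hypothetical: requirement -> sub-task descriptions
    generate: Callable[[str], str],         # hypothetical: sub-task description -> code
    test: Callable[[str], bool],            # hypothetical: code -> did the tests pass?
    deploy: Callable[[List[str]], None],    # hypothetical: ship the generated code
    max_attempts: int = 3,
) -> AgentRun:
    """Break a requirement into sub-tasks, generate and test each, then deploy."""
    run = AgentRun(requirement)
    for description in plan(requirement):
        task = SubTask(description)
        # Retry generation a bounded number of times until the tests pass.
        for _ in range(max_attempts):
            task.code = generate(task.description)
            task.passed = test(task.code)
            if task.passed:
                break
        run.subtasks.append(task)

    # Deploy only when there is at least one sub-task and every one passed.
    if run.subtasks and all(t.passed for t in run.subtasks):
        deploy([t.code for t in run.subtasks])
        run.deployed = True
    return run


if __name__ == "__main__":
    # Stub callables stand in for the model, the test runner, and the deploy step.
    result = run_agent(
        "add CSV export",
        plan=lambda req: ["parse input", "write file"],
        generate=lambda desc: f"# code for {desc}",
        test=lambda code: True,
        deploy=lambda codes: print(f"deploying {len(codes)} unit(s)"),
    )
    print(result.deployed)
```

Passing the steps in as callables keeps the control flow visible and makes it easy to put a human approval step in front of `deploy`.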

The Evaluation Challenge: Beyond Traditional Benchmarks

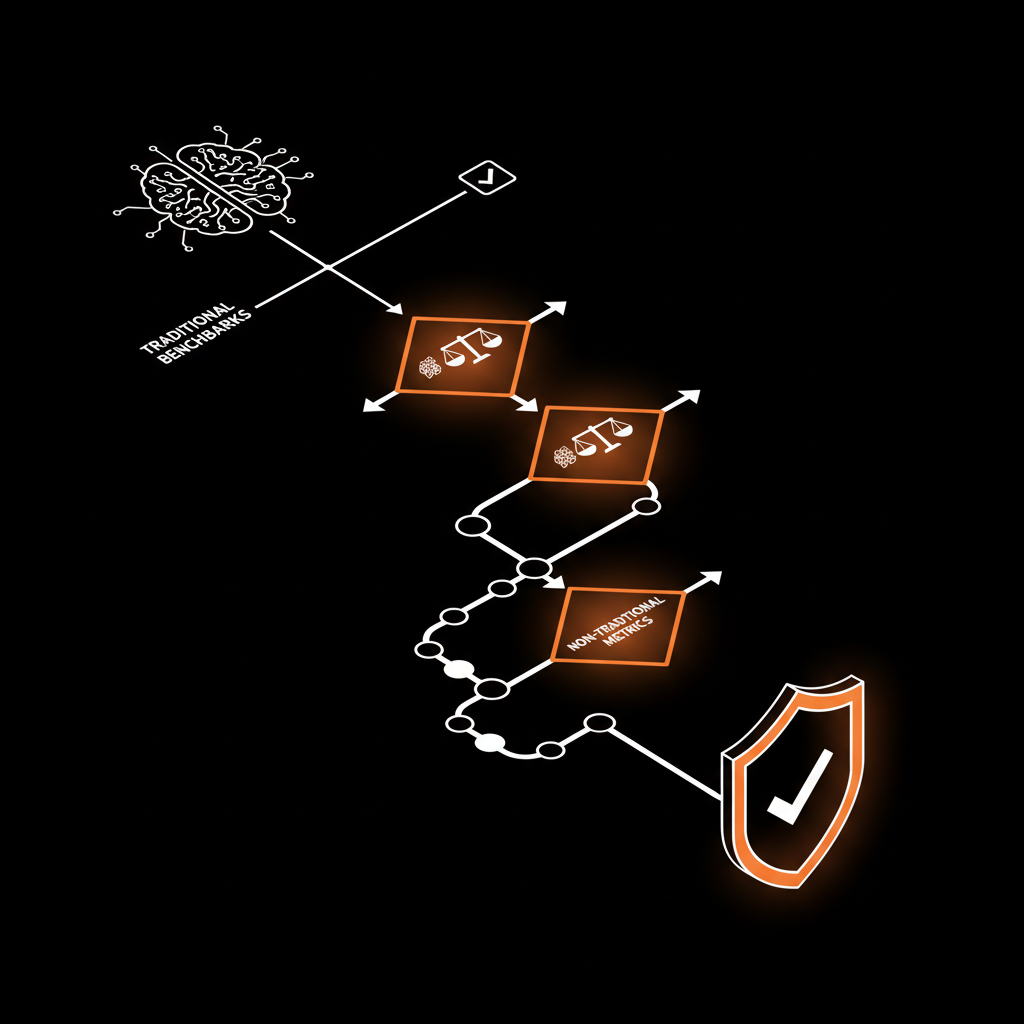

As the capabilities of AI agents grow, the need for robust evaluation methods has become critical. It's no longer enough to measure the accuracy of a language model or its ability to generate coherent text. We need to understand how effective agents are at solving real-world software engineering problems. This is where benchmarks like SWE-bench come into play.

SWE-bench is a benchmark built from real issues in GitHub repositories, ranging from bug fixes to new features. It does not rely on multiple-choice questions: the agent must produce a working patch, which is judged by running the repository's tests, including tests tied to the issue that fail before the fix. Current results, while constantly improving, show that even the most advanced agents achieve success rates still far from human levels. This highlights a fundamental point: while agents can be incredibly fast at proposing solutions, human review of every change remains an indispensable safeguard to ensure quality, security, and adherence to client specifications. At Logika.studio, we firmly believe that speed (we are 3-5× faster than a traditional agency) must always go hand-in-hand with maximum reliability.
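To make the scoring idea concrete, here is a minimal sketch of how a test-based success rate can be computed. It is not the SWE-bench harness: `PatchResult`, `is_resolved`, and `resolution_rate` are hypothetical names, and the sample data is made up purely to exercise the functions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PatchResult:
    issue_id: str
    # Test name -> whether it passed after the agent's patch was applied.
    test_outcomes: Dict[str, bool]


def is_resolved(result: PatchResult) -> bool:
    """An issue counts as resolved only if tests ran and every one passed."""
    return bool(result.test_outcomes) and all(result.test_outcomes.values())


def resolution_rate(results: List[PatchResult]) -> float:
    """Fraction of issues whose patch passed all of its tests."""
    if not results:
        return 0.0
    return sum(is_resolved(r) for r in results) / len(results)


if __name__ == "__main__":
    # Illustrative data only, not real benchmark results.
    sample = [
        PatchResult("issue-1", {"test_parse": True, "test_export": True}),
        PatchResult("issue-2", {"test_parse": True, "test_export": False}),
    ]
    print(f"resolved: {resolution_rate(sample):.0%}")
```

The all-or-nothing rule is the point: a patch that fixes the reported bug but breaks one unrelated test still counts as a failure, which is part of why scores on this kind of benchmark are hard to raise.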

Autonomous Agents in Production: Potential Risks and Human Oversight

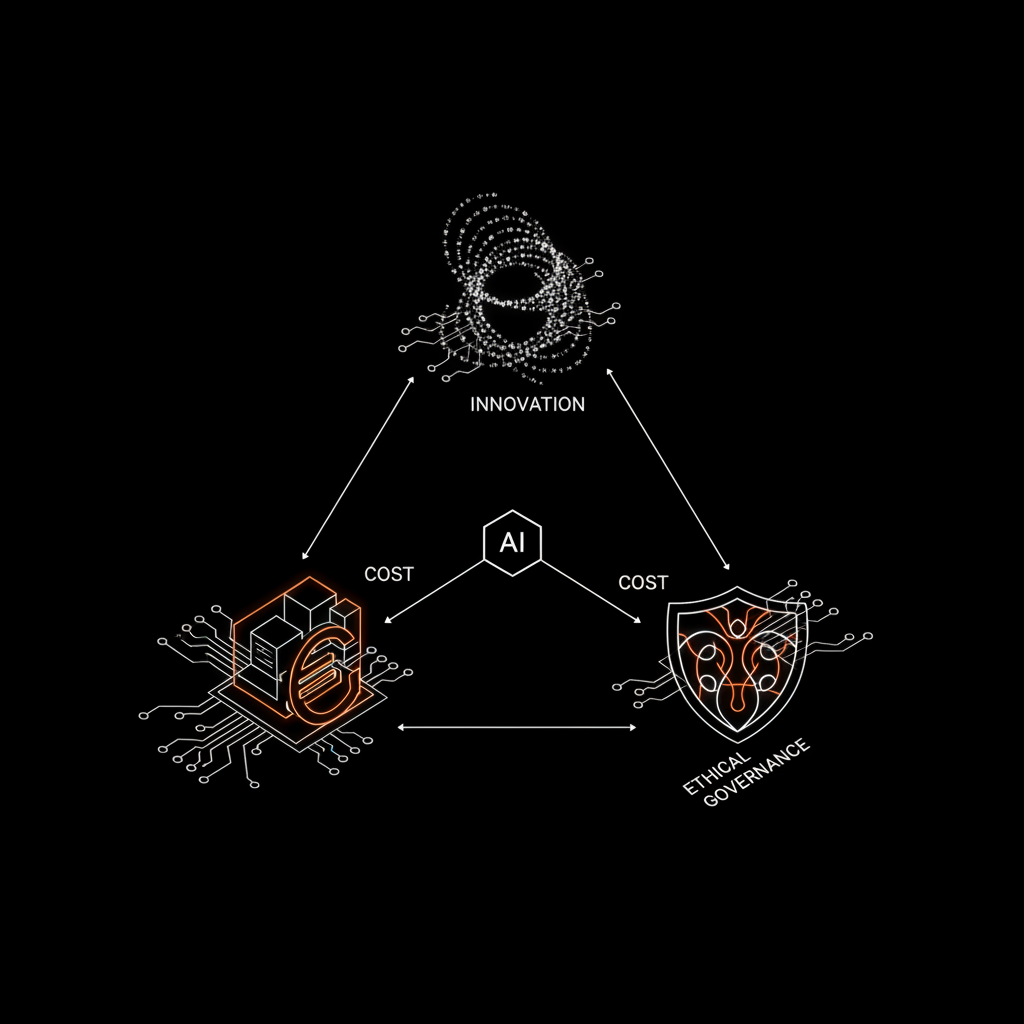

The idea that AI agents can autonomously interact with production systems opens up exciting yet potentially risky scenarios. Imagine an AI agent monitoring an algorithmic trading application, identifying an anomaly, diagnosing the cause, and deploying a corrective patch. The reaction speed would be unparalleled. However, an agent operating without adequate supervision can introduce security vulnerabilities, cause service disruptions, or generate undesirable cascading effects. Security and operational concerns become paramount.

That's why Logika.studio's approach is to leverage the power of 'swarms of AI agents' under the supervision and guidance of a single expert human lead. This model ensures not only the efficiency and speed of the agents but also full code ownership for the client and rigorous human review at every stage. Tools like Polars for data analysis or Claude/Gemini/OpenAI APIs for linguistic interaction are fundamental building blocks, but the true added value lies in orchestrating these tools with intelligence and responsibility, especially when applying AI to SMEs.
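One way to express "human review at every stage" in code is a gate that refuses to deploy anything a person has not approved. The sketch below is an illustration under assumptions, not Logika.studio's actual tooling: `ProposedPatch`, `record_review`, `can_deploy`, and `deploy` are hypothetical names, and `deploy_fn` stands in for whatever release mechanism a team uses.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Review(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ProposedPatch:
    agent_name: str
    diff: str
    tests_passed: bool
    review: Review = Review.PENDING
    reviewer: str = ""


def record_review(patch: ProposedPatch, reviewer: str, approved: bool) -> None:
    """Store the human lead's decision on an agent-generated patch."""
    patch.reviewer = reviewer
    patch.review = Review.APPROVED if approved else Review.REJECTED


def can_deploy(patch: ProposedPatch) -> bool:
    """Both conditions are required: green tests and explicit human approval."""
    return patch.tests_passed and patch.review is Review.APPROVED


def deploy(patch: ProposedPatch, deploy_fn: Callable[[str], None]) -> None:
    """Run the (hypothetical) deploy function only for approved patches."""
    if not can_deploy(patch):
        raise PermissionError(
            f"patch from {patch.agent_name} is not cleared for deployment "
            f"(tests_passed={patch.tests_passed}, review={patch.review.value})"
        )
    deploy_fn(patch.diff)


if __name__ == "__main__":
    patch = ProposedPatch("agent-1", "--- a/app.py\n+++ b/app.py", tests_passed=True)
    record_review(patch, reviewer="human lead", approved=True)
    deploy(patch, deploy_fn=lambda diff: print("deploying patch"))
```

Because the default review state is `PENDING`, an agent that skips the review step fails closed: nothing reaches production until a named reviewer has signed off.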

The future of software development is undeniably hybrid, a synergy between artificial intelligence and human ingenuity. The challenge isn't to replace developers, but to empower them, freeing them from repetitive tasks and allowing them to focus on complexity and innovation. So, how can we best balance the autonomy of AI agents with the need for human control and the assurance of security in a dynamic production environment?